
Lecture 06: Generalized Linear Models (Binary Outcome) and Matrix Algebra

Generalized Linear Models

Educational Statistics and Research Methods (ESRM) Program

University of Arkansas

2024-09-24

Today’s Class

- Introduction to Generalized Linear Models

- Expanding your linear models knowledge to models for outcomes that are not conditionally normally distributed

- An example of generalized models for binary data using logistic regression

- Matrix Algebra

An Introduction to Generalized Linear Models

Categories of Multivariate Models

Statistical models can be broadly organized as:

- General (normal outcome) vs. Generalized (not normal outcome)

- One dimension of sampling (one variance term per outcome) vs. multiple dimensions of sampling (multiple variance terms)

- Fixed effects only vs. Mixed effects (fixed and random effects = multilevel)

All models have fixed effects, and then:

- General Linear Models (GLM): conditionally normal distribution of data, fixed effects and no random effects

- General Linear Mixed Models (GLMM): conditionally normal distribution for data, fixed and random effects

- Generalized Linear Models: any conditional distribution for data, fixed effects through link functions, no random effects

- Generalized Linear Mixed Models: any conditional distribution for data, fixed effects through link functions, fixed and random effects

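“Linear” means the fixed effects predict the link-transformed mean of the DV as a linear combination of the predictors:

\[ g(E(Y)) = \beta_0 +\beta_1X_1+ \beta_2X_2 + \cdots \quad \Longleftrightarrow \quad E(Y) = g^{-1}(\beta_0 +\beta_1X_1+ \beta_2X_2 + \cdots) \]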

Unpacking the Big Picture

Substantive theory: what guides your study

Hypothetical causal process: what the statistical model is testing (attempting to falsify) when estimated

Observed outcomes: what you collect and evaluate based on your theory

Outcomes can take many forms:

Continuous variables (e.g., time, blood pressure, height)

Categorical variables (e.g., Likert-type response, ordered categories, nominal categories)

Combinations of continuous and categorical (e.g., either 0 or some other continuous number)

The Goal of Generalized Models

Generalized models map the substantive theory onto the sample space of the observed outcomes

- Sample space = type/range/outcomes that are possible

The general idea is that the statistical model will not approximate the outcome well if the assumed distribution is not a good fit to the sample space of the outcome

- If model does not fit the outcome, the findings cannot be trusted

The key to making all of this work is the use of differing statistical distributions for the outcome

Generalized models allow for different distributions for outcomes

- The mean of the distribution is still modeled by the model for the means (the fixed effects)

- The variance of the distribution may or may not be modeled (some distributions don’t have variance terms)

What kind of outcome? Generalized vs. General

Generalized Linear Models \(\rightarrow\) General Linear Models whose outcomes follow a conditional distribution that is not normal and in which a link-transformed mean of Y is predicted instead of Y on its original scale

Many kinds of non-normally distributed outcomes have some kind of generalized linear model to go with them:

- Binary (dichotomous)

- Unordered categorical (nominal)

- Ordered categorical (ordinal)

- These two (nominal and ordinal) are often, and inconsistently, called “multinomial”

- Counts (discrete, non-negative values)

- Censored (piled up and cut off at one end – left or right)

- Zero-inflated (pile of 0’s, then some distribution after)

- Continuous but skewed data (pile on one end, long tail)

Common distributions and canonical link functions (from Wikipedia)

| Distribution | Support of distribution | Typical uses | Link name | Link function \(\mathbf{X}\boldsymbol{\beta} = g(\mu)\) | Mean function |
|---|---|---|---|---|---|
| Normal | real: \((-\infty, +\infty)\) | Linear-response data | Identity | \(\mathbf{X}\boldsymbol{\beta} = \mu\) | \(\mu = \mathbf{X}\boldsymbol{\beta}\) |
| Exponential | real: \((0, +\infty)\) | Exponential-response data, scale parameters | Negative inverse | \(\mathbf{X}\boldsymbol{\beta} = -\mu^{-1}\) | \(\mu = -(\mathbf{X}\boldsymbol{\beta})^{-1}\) |
| Gamma | real: \((0, +\infty)\) | Exponential-response data, scale parameters | Negative inverse | \(\mathbf{X}\boldsymbol{\beta} = -\mu^{-1}\) | \(\mu = -(\mathbf{X}\boldsymbol{\beta})^{-1}\) |
| Inverse Gaussian | real: \((0, +\infty)\) | | Inverse squared | \(\mathbf{X}\boldsymbol{\beta} = \mu^{-2}\) | \(\mu = (\mathbf{X}\boldsymbol{\beta})^{-1/2}\) |
| Poisson | integer: \(0, 1, 2, \ldots\) | count of occurrences in fixed amount of time/space | Log | \(\mathbf{X}\boldsymbol{\beta} = \ln(\mu)\) | \(\mu = \exp(\mathbf{X}\boldsymbol{\beta})\) |
| Bernoulli | integer: \(\{0, 1\}\) | outcome of single yes/no occurrence | Logit | \(\mathbf{X}\boldsymbol{\beta} = \ln\left(\frac{\mu}{1-\mu}\right)\) | \(\mu = \frac{\exp(\mathbf{X}\boldsymbol{\beta})}{1+\exp(\mathbf{X}\boldsymbol{\beta})} = \frac{1}{1+\exp(-\mathbf{X}\boldsymbol{\beta})}\) |
| Binomial | integer: \(0, 1, \ldots, N\) | count of # of "yes" occurrences out of N yes/no occurrences | Logit | \(\mathbf{X}\boldsymbol{\beta} = \ln\left(\frac{\mu}{N-\mu}\right)\) | |
| Categorical | integer: \([0, K)\); or K-vector of integer: \([0, 1]\), where exactly one element in the vector has the value 1 | outcome of single K-way occurrence | Logit | \(\mathbf{X}\boldsymbol{\beta} = \ln\left(\frac{\mu}{1-\mu}\right)\) | |
| Multinomial | K-vector of integer: \([0, N]\) | count of occurrences of different types (1, ..., K) out of N total K-way occurrences | Logit | \(\mathbf{X}\boldsymbol{\beta} = \ln\left(\frac{\mu}{1-\mu}\right)\) | |
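As a rough illustration of the link and mean columns in the table, base R's `make.link()` returns both functions for a named link. The value of `mu` below is an arbitrary illustration value, not an estimate from the lecture data.

```r
# Link (g) and mean (g^-1) functions for three common links, using base R
links <- list(
  identity = make.link("identity"),
  log      = make.link("log"),
  logit    = make.link("logit")
)

mu <- 0.25   # arbitrary mean that is valid for all three links

# eta = g(mu): the scale on which the predictors act linearly
eta <- sapply(links, function(l) l$linkfun(mu))
print(eta)

# g^-1(eta) maps the linear predictor back to the mean
mu_back <- mapply(function(l, e) l$linkinv(e), links, eta)
print(mu_back)
```

Each link returns a different value of `eta` for the same mean, and applying the matching inverse link recovers `mu` in every case.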

Three Parts of a Generalized Linear Model

Link Function \(g(\cdot)\) (main difference from GLM):

How a non-normal outcome gets transformed into something we can predict that is more continuous (unbounded)

For outcomes that are already normal, general linear models are just a special case with an “identity” link function (Y * 1)

Model for the Means (“Structural Model”):

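How predictors are linearly related to the link-transformed outcome

- Or, equivalently, how predictors are non-linearly related to the outcome on its original scale

Link-transformed mean: \(\color{royalblue}{g}(\hat{Y}_p) = \color{tomato}{\mathbf{\beta_0}} + \color{tomato}{\mathbf{\beta_1}}X_p + \color{tomato}{\mathbf{\beta_2}} Z_p + \color{tomato}{\mathbf{\beta_3}} X_pZ_p\), so that \(\hat{Y}_p = \color{royalblue}{g^{-1}} (\color{tomato}{\mathbf{\beta_0}} + \color{tomato}{\mathbf{\beta_1}}X_p + \color{tomato}{\mathbf{\beta_2}} Z_p + \color{tomato}{\mathbf{\beta_3}} X_pZ_p)\)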

Model for the Variance (“Sampling/Stochastic Model”):

- If the errors aren’t normally distributed, then what are they?

- Family of alternative distributions at our disposal that map onto what the distribution of errors could possibly look like

- In logistic regression, you often hear sayings like “no error term exists” or “the error term has a binomial distribution”

Link Functions: How Generalized Models Work

Generalized models work by providing a mapping of the theoretical portion of the model (the right hand side of the equation) to the sample space of the outcome (the left hand side of the equation)

- The mapping is done by a feature called a link function

The link function is a non-linear function; its inverse takes the linear combination of model predictors, random/latent terms, and constants and puts it onto the space of the observed outcome variables

Link functions are typically expressed for the mean of the outcome variable (we will only focus on that)

- In generalized models, the error variance is often a function of the mean, so no additional information is gained by estimating a separate error term

Link Functions in Practice

- The link function expresses the conditional value of the mean of the outcome

\[ E(Y_p) = \hat{Y}_p = \mu_y \]

where \(E(\cdot)\) stands for expectation.

… through a non-linear link function \(g(\hat Y_p)\) when used on conditional mean of outcome

or its inverse link function \(g^{-1}(\mathbf{\beta X})\) when used on linear combination of predictors

The general form is:

\[ E(Y_p) =\hat{Y}_p = \mu_y =\color{royalblue}{g^{-1}}(\color{tomato}{\beta_0+\beta_1X_p+\beta_2Z_p+\beta_3X_pZ_p}) \]

The red part is the linear combination of predictors and their effects.

Why normal GLM is one type of Generalized Linear Model

- Our familiar general linear model is actually a member of the generalized model family (it is subsumed)

- The link function is called the identity, the linear predictor is unchanged

- The normal distribution has two parameters, a mean \(\mu\) and a variance \(\sigma^2\)

- Unlike most distributions, the normal distribution parameters are directly modeled by the GLM

- In conditionally normal GLMs, the inverse link function is called the identity function:

\[ g^{-1}(\cdot) = \boldsymbol{I}(\cdot) = 1 * (\color{yellowgreen}{\text{linear combination of predictors}}) \]

The identity function does not alter the predicted values – they can be any real number

This matches the sample space of the normal distribution – the mean can be any real number

- The expected value of an outcome from the GLM is:

\[ \begin{align} \mathbb{E}(Y_p) =\hat{Y}_p = \mu_y &=\color{royalblue}{\boldsymbol{I}}(\color{yellowgreen}{\beta_0+\beta_1X_p+\beta_2Z_p+\beta_3X_pZ_p}) \\ &= \beta_0+\beta_1X_p+\beta_2Z_p+\beta_3X_pZ_p \end{align} \]

About the Variance of GLM

The other parameter of the normal distribution describes the variance of an outcome – called the error (residual) variance

We found that the model for the variance for the GLM was:

\[ \begin{align} Var(Y_p) &= Var(\beta_0+\beta_1X_p+\beta_2Z_p+\beta_3X_pZ_p + \color{tomato}{e}_p ) \\ &= Var(e_p) \\ &= \sigma^2_e \end{align} \]

- Similarly, this term directly relates to the variance of the outcome in the normal distribution

- We will quickly see distributions from other families (e.g., the Bernoulli distribution used in logistic regression) where this doesn’t happen

- Error terms are independent of predictors

Generalized Linear Models For Binary Data

Today’s Data Example

- To help demonstrate generalized models for binary data, we borrow from an example listed on the UCLA ATS website.

- The data are available once you upgrade the `ESRM64503` package

- Data come from a survey of 400 college juniors looking at factors that influence the decision to apply to graduate school:

- Y (outcome): student rating of likelihood he/she will apply to grad school (0 = unlikely; 1 = somewhat likely; 2 = very likely)

- We will first look at Y for two categories (0 = unlikely; 1 = somewhat or very likely) – we merged Cat1 and Cat2 into one category for illustration

- You wouldn’t do this in practice (use a different distribution for 3 categories)

- ParentEd: indicator (0/1) if one or more parent has graduate degree

- Public: indicator (0/1) if student attends a public university

- GPA: grade point average on 4 point scale (4.0 = perfect)


Descriptive Statistics for Data


library(ESRM64503)

library(tidyverse)

library(kableExtra)

Desp_GPA <- dataLogit |>

summarise(

Variable = "GPA",

N = n(),

Mean = mean(GPA),

`Std Dev` = sd(GPA),

Minimum = min(GPA),

Maximum = max(GPA)

)

Desp_Apply <- dataLogit |>

group_by(APPLY) |>

summarise(

Frequency = n(),

Percent = n() / nrow(dataLogit) * 100

) |>

ungroup() |>

mutate(

`Cumulative Frequency` = cumsum(Frequency),

`Cumulative Percent` = cumsum(Percent)

)

Desp_LLApply <- dataLogit |>

group_by(LLAPPLY) |>

summarise(

Frequency = n(),

Percent = n() / nrow(dataLogit) * 100

) |>

ungroup() |>

mutate(

`Cumulative Frequency` = cumsum(Frequency),

`Cumulative Percent` = cumsum(Percent)

)

Desp_PARED <- dataLogit |>

group_by(PARED) |>

summarise(

Frequency = n(),

Percent = n() / nrow(dataLogit) * 100

) |>

ungroup() |>

mutate(

`Cumulative Frequency` = cumsum(Frequency),

`Cumulative Percent` = cumsum(Percent)

)

Desp_PUBLIC <- dataLogit |>

group_by(PUBLIC) |>

summarise(

Frequency = n(),

Percent = n() / nrow(dataLogit) * 100

) |>

ungroup() |>

mutate(

`Cumulative Frequency` = cumsum(Frequency),

`Cumulative Percent` = cumsum(Percent)

)

| APPLY | Frequency | Percent | Cumulative Frequency | Cumulative Percent |

|---|---|---|---|---|

| 0 | 220 | 55 | 220 | 55 |

| 1 | 140 | 35 | 360 | 90 |

| 2 | 40 | 10 | 400 | 100 |

| LLAPPLY | Frequency | Percent | Cumulative Frequency | Cumulative Percent |

|---|---|---|---|---|

| 0 | 220 | 55 | 220 | 55 |

| 1 | 180 | 45 | 400 | 100 |

| Variable | N | Mean | Std Dev | Minimum | Maximum |

|---|---|---|---|---|---|

| GPA | 400 | 2.998925 | 0.3979409 | 1.9 | 4 |

| PARED | Frequency | Percent | Cumulative Frequency | Cumulative Percent |

|---|---|---|---|---|

| 0 | 337 | 84.25 | 337 | 84.25 |

| 1 | 63 | 15.75 | 400 | 100.00 |

| PUBLIC | Frequency | Percent | Cumulative Frequency | Cumulative Percent |

|---|---|---|---|---|

| 0 | 343 | 85.75 | 343 | 85.75 |

| 1 | 57 | 14.25 | 400 | 100.00 |

What If We Used a Normal GLM for Binary Outcomes?

- If \(Y_p\) is a binary (0 or 1) outcome

- Expected mean is proportion of people who have a 1 (or “p”, the probability of Y_p = 1 in the sample)

- The probability of having a 1 is what we’re trying to predict for each person, given the values of his/her predictors

- General linear model: \(Y_p = I(\beta_0 + \beta_1x_p + \beta_2z_p + e_p)\)

- \(\color{tomato}{\beta}_0\) = expected probability when all predictors are 0

- \(\color{tomato}{\beta}_s\) = expected change in probability for a one-unit change in the predictor

- \(\color{royalblue}{e}_p\) = difference between observed and predicted values

- The general linear model then becomes \(Y_p = \color{tomato}{(\text{predicted probability of outcome equal to 1})} + e_p\)

A General Linear Model of Predicting Binary Outcomes?

But if \(Y_p\) is binary and link function is identity link, then \(e_p\) can only be 2 things:

\(e_p\) = \(Y_p - \hat{Y}_p\)

If \(Y_p = 0\) then \(e_p\) = (0 - predicted probability)

If \(Y_p = 1\) then \(e_p\) = (1 - predicted probability)

The mean of errors would still be 0 … by definition

But variance of errors can’t possibly be constant over levels of X like we assume in general linear models

The mean and variance of a binary outcome are dependent!

As shown shortly, mean = p and variance = p * (1 - p), so they are tied together

This means that because the conditional mean of Y (p, the predicted probability Y = 1) is dependent on X, then so is the error variance
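A small numeric check of the mean-variance link described above; the grid of probabilities is arbitrary and chosen only for illustration.

```r
# Variance of a binary outcome as a function of its mean p
p <- seq(0.1, 0.9, by = 0.2)
bern_var <- p * (1 - p)
print(data.frame(p = p, variance = bern_var))

# The variance is largest at p = .5 and shrinks as p moves toward 0 or 1,
# so it cannot stay constant when p changes with X
p[which.max(bern_var)]
```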

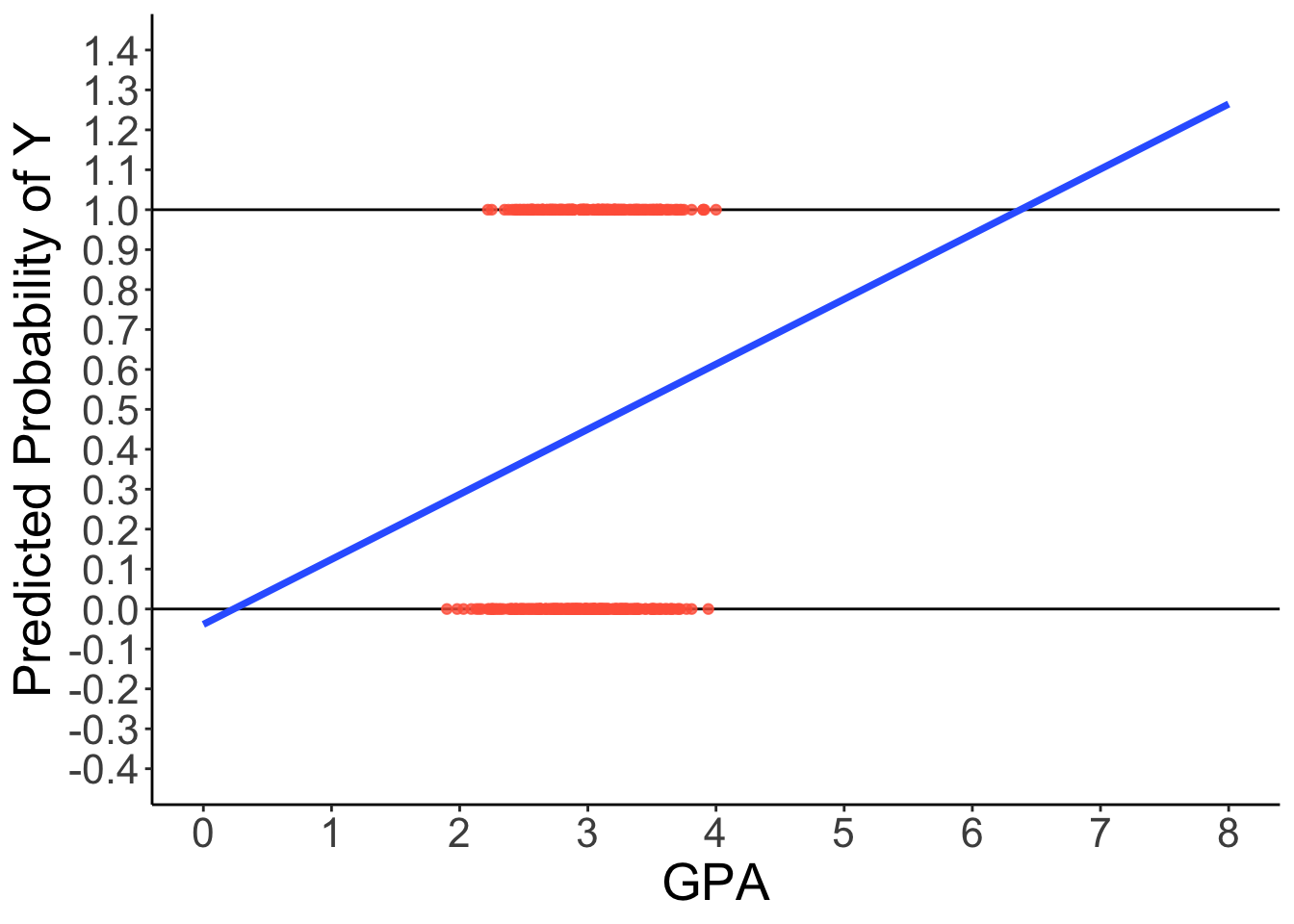

A General Linear Model With Binary Outcomes?

How can we have a linear relationship between X & Y?

Probability of a 1 is bounded between 0 and 1, but predicted probabilities from a linear model aren’t bounded

- Impossible values

Linear relationship needs to ‘shut off’ somehow \(\rightarrow\) made nonlinear
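The out-of-range problem can be sketched with simulated data. Everything below is hypothetical: the seed, the sample size, and the true logistic relationship are assumptions made for illustration and are unrelated to the lecture data set.

```r
set.seed(64503)   # arbitrary seed for reproducibility

# Simulated data: the true model is logistic in x
n <- 500
x <- runif(n, min = -3, max = 3)
y <- rbinom(n, size = 1, prob = plogis(x))

fit_lm  <- lm(y ~ x)                        # identity link, normal errors
fit_glm <- glm(y ~ x, family = binomial)    # logit link, Bernoulli outcome

# Predict at values of x beyond the observed range
new_x <- data.frame(x = c(-6, 0, 6))
pred_lm  <- predict(fit_lm,  newdata = new_x)
pred_glm <- predict(fit_glm, newdata = new_x, type = "response")

print(round(cbind(x = new_x$x, linear = pred_lm, logistic = pred_glm), 3))
```

The linear predictions fall below 0 and above 1 at the extremes, while the logistic predictions stay inside the unit interval.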

Predicted Regression Line of GLM


library(ggplot2)

ggplot(dataLogit) +

aes(x = GPA, y = LLAPPLY) +

geom_hline(aes( yintercept = 1)) +

geom_hline(aes( yintercept = 0)) +

geom_point(color = "tomato", alpha = .8) +

geom_smooth(method = "lm", se = FALSE, fullrange = TRUE, linewidth = 1.3) +

scale_x_continuous(limits = c(0, 8), breaks = 0:8) +

scale_y_continuous(limits = c(-0.4, 1.4), breaks = seq(-0.4, 1.4, .1)) +

labs(y = "Predicted Probability of Y") +

theme_classic() +

theme(text = element_text(size = 20))

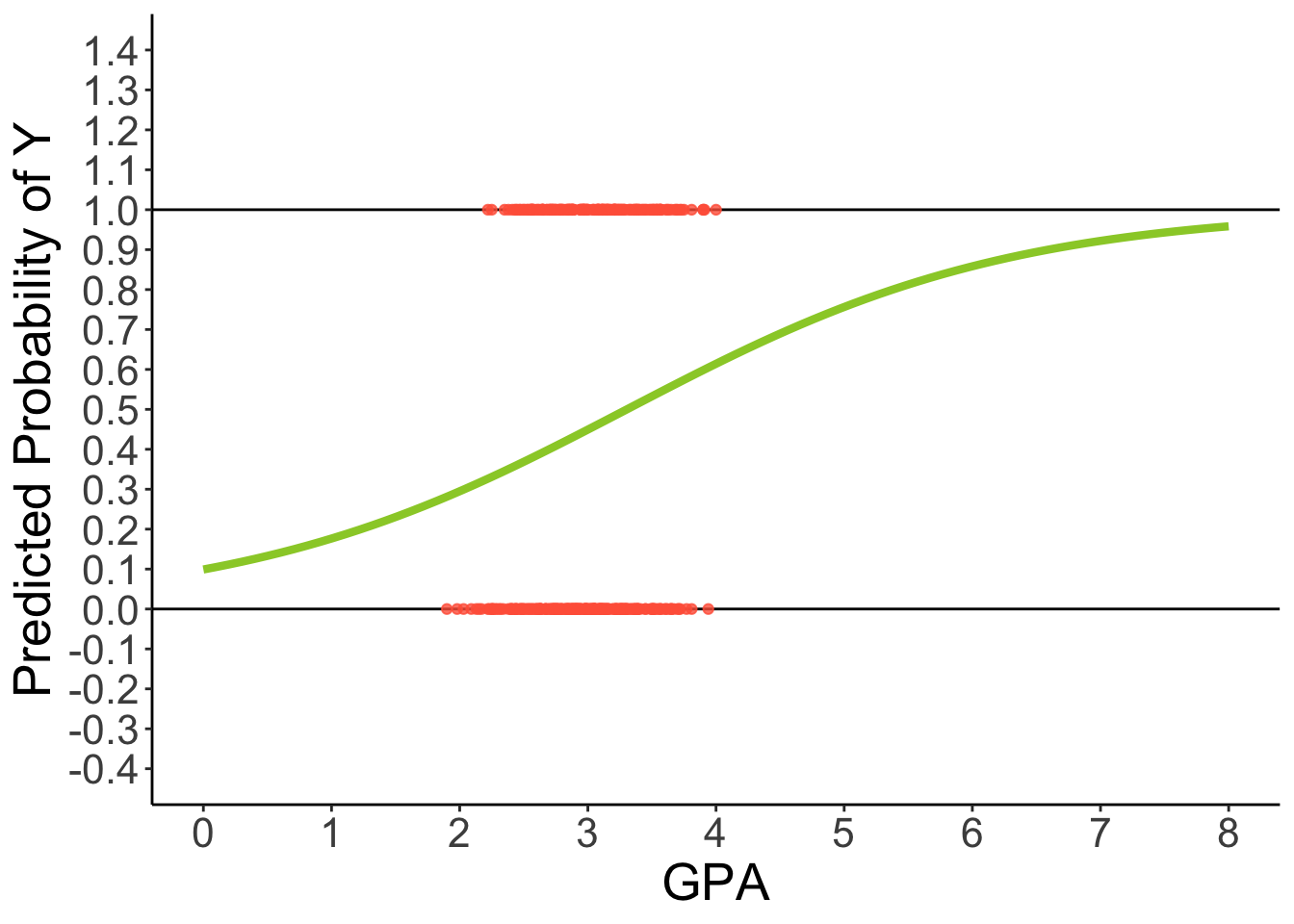

Predicted Regression Line of Logistic Regression


ggplot(dataLogit) +

aes(x = GPA, y = LLAPPLY) +

geom_hline(aes( yintercept = 1)) +

geom_hline(aes( yintercept = 0)) +

geom_point(color = "tomato", alpha = .8) +

geom_smooth(method = "glm", se = FALSE, fullrange = TRUE,

method.args = list(family = binomial(link = "logit")), color = "yellowgreen", linewidth = 1.5) +

scale_x_continuous(limits = c(0, 8), breaks = 0:8) +

scale_y_continuous(limits = c(-0.4, 1.4), breaks = seq(-0.4, 1.4, .1)) +

labs(y = "Predicted Probability of Y") +

theme_classic() +

theme(text = element_text(size = 20))

3 Problems with GLM predicting binary outcomes

Assumption Violation problem: the GLM assumes a continuous, conditionally normal outcome, but the residuals of a binary outcome can’t be normally distributed

Restricted Range problem (e.g., 0 to 1 for outcomes)

Predictors should not be linearly related to observed outcome

- Effects of predictors need to be ‘shut off’ at some point to keep predicted values of binary outcome within range

Decision Making Problem: for the GLM, the predicted value is a continuous value on the same scale as the outcome (Y), so how do we answer a question such as whether or not students will apply given a certain value of GPA?

- With a generalized linear model, the prediction is a probability, so we can say, for example, that students have a 50% probability of applying

The Binary Outcome: Bernoulli Distribution

Bernoulli distribution has following properties

Notation: \(Y_p \sim B(\boldsymbol{p}_p)\) (where p is the conditional probability of a 1 for person p)

Sample Space: \(Y_p \in \{0, 1\}\) (\(Y_p\) can either be a 0 or a 1)

Probability Mass Function (PMF):

- \[ f(Y_p) = (\mathbf{p}_p)^{Y_p}(1-\mathbf{p}_p)^{1-Y_p} \]

Expected value (mean) of Y: \(E(Y_p) = \mu_{Y_p}=\boldsymbol{p}_p\)

Variance of Y: \(Var(Y_p) = \sigma^2_{Y_p} = \boldsymbol{p}_p ( 1- \boldsymbol{p}_p )\)

Note

\(\boldsymbol{p}_p\) is the only parameter – so we only need to provide a link function for it …
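The Bernoulli properties listed above can be verified directly in base R. The value `p = 0.7` is an arbitrary illustration value, and `bern_pmf()` is a helper defined here only for this sketch.

```r
# Bernoulli probability function written out as in the formula above
bern_pmf <- function(y, p) p^y * (1 - p)^(1 - y)

p <- 0.7          # arbitrary illustration value
y <- c(0, 1)
f <- bern_pmf(y, p)

# Matches base R's binomial probability function with a single trial
all.equal(f, dbinom(y, size = 1, prob = p))

# Mean and variance computed directly from the probabilities
mean_y <- sum(y * f)                  # equals p
var_y  <- sum((y - mean_y)^2 * f)     # equals p * (1 - p)
c(mean = mean_y, variance = var_y)
```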

Generalized Models for Binary Outcomes

Rather than modeling the probability of a 1 directly, we need to transform it into a more continuous variable with a link function, for example:

We could transform probability into odds

Odds (labelled “odds ratio”, OR, throughout this lecture): \(\frac{p}{1-p} = \frac{Pr(Y = 1)}{Pr(Y = 0)}\)

For example, if \(p = .7\), then OR(1) = 2.33; OR(0) = .429

The odds scale is highly skewed, asymmetric, and ranges from 0 to \(+\infty\)

- This is not a helpful property

Take the natural log of the odds \(\rightarrow\) called the “logit” link

\(\text{logit}(p) = \log(\frac{p}{1-p})\)

For example, \(\text{logit}(.7) = .847\) and \(\text{logit}(.3) = -.847\)

The logit scale is now symmetric about \(p = .5\) (where the logit is 0)

The logit link is one of many used for the Bernoulli distribution

- Names of others: Probit, Log-Log, Complementary Log-Log
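The odds and logit transformations described above can be checked numerically. This is a minimal base R sketch; `qlogis()` is R's built-in logit function, and the three probabilities are illustration values.

```r
p <- c(0.3, 0.5, 0.7)

odds  <- p / (1 - p)    # ranges from 0 to +Inf and is skewed
logit <- log(odds)      # ranges over the whole real line

# qlogis() is base R's logit function and gives the same values
stopifnot(isTRUE(all.equal(logit, qlogis(p))))

print(round(data.frame(p, odds, logit), 3))
```

The logits for .3 and .7 are equal in size and opposite in sign, which is the symmetry about \(p = .5\) that the odds scale lacks.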

More Details about Logit Transformation

The link function for a logit is defined by:

\[ g(\mathbb{E}(Y)) = \log(\frac{P(Y=1)}{1-P(Y=1)}) = \beta X^T \qquad(1)\]

where \(g\) is called the link function, \(\mathbb{E}(Y)\) is the expectation of Y, and \(\beta X^T\) is the linear predictor

A logit can be translated back to a probability with some algebra:

\[ \begin{align} P(Y=1) &= \frac{\exp(\beta X^T)}{1+\exp(\beta X^T)} \\ &= \frac{\exp(\beta_0 + \beta_1 X + \beta_2Z + \beta_3XZ)}{1+\exp(\beta_0 + \beta_1 X + \beta_2Z + \beta_3XZ)} \\ &= (1+\exp(-1*(\beta_0 + \beta_1 X + \beta_2Z + \beta_3XZ)))^{-1} \qquad(2) \end{align} \]

From Equation 1 and Equation 2, we can see that \(g(\mathbb{E}(Y))\) has a range of \((-\infty, +\infty)\), while P(Y = 1) has a range of (0, 1).
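A quick check that the two algebraic forms of the inverse logit agree with each other and with base R's `plogis()`. The coefficients and predictor values below are hypothetical and used only to produce a concrete linear predictor.

```r
# Hypothetical coefficients and predictor values, for illustration only
beta <- c(b0 = -1.0, b1 = 0.8, b2 = 0.5, b3 = -0.3)
X <- 1.5
Z <- 1

# Linear predictor: b0 + b1*X + b2*Z + b3*X*Z
eta <- unname(beta["b0"] + beta["b1"] * X + beta["b2"] * Z + beta["b3"] * X * Z)

p_form1 <- exp(eta) / (1 + exp(eta))   # first form of the inverse logit
p_form2 <- 1 / (1 + exp(-eta))         # second form of the inverse logit
p_base  <- plogis(eta)                 # base R inverse logit

c(eta = eta, form1 = p_form1, form2 = p_form2, plogis = p_base)

# Applying the logit to the probability returns the linear predictor
qlogis(p_base)
```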

Interpretation of Coefficients

# function to translate OR to Probability

OR_to_Prob <- function(OR){

p = OR / (1+OR)

return(p)

}

# function to translate Logit to Probability

Logit_to_Prob <- function(Logit){

OR <- exp(Logit)

p = OR_to_Prob(OR)

return(p)

}

Logit_to_Prob(Logit = -2.007) # p = .118

[1] 0.1184699

[1] 0.1343912

Intercept \(\beta_0\):

Logit: \(\beta_0\) is the predicted logit of Y = 1 for an individual when all predictors are zero; e.g., with \(\beta_0 = -2.007\), the average logit is -2.007

Probability: Alternatively, the expected probability of Y = 1 is \(\frac{\exp(\beta_0)}{1+\exp(\beta_0)}\) when all predictors are zero; i.e., the average probability of applying to grad school is 0.1184699

Odds Ratio: Alternatively, the expected odds (OR) of Y = 1 are \(\exp(\beta_0)\) when all predictors are zero; i.e., the average odds of applying to grad school are exp(-2.007) = 0.13439

Slope \(\beta_1\):

Logit: \(\beta_1\) is the predicted increase in the logit of Y = 1 for a one-unit increase in X

Probability: the expected increase in the probability of Y = 1 when X goes from 0 to 1 is \(\frac{\exp(\beta_0+\beta_1)}{1+\exp(\beta_0+\beta_1)}-\frac{\exp(\beta_0)}{1+\exp(\beta_0)}\); note that the increase (\(\Delta(\beta_0, \beta_1)\)) is non-linear: its size depends on the starting value of X

Odds Ratio: the expected odds of Y = 1 are multiplied by \(\exp(\beta_1)\) for a one-unit increase in X, so \(\exp(\beta_1)\) is the ratio of the new odds to the old odds (hint: the new odds are \(\exp(\beta_0+\beta_1) = \exp(\beta_0)\exp(\beta_1)\) when X = 1 and the old odds are \(\exp(\beta_0)\) when X = 0)
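The three readings of a slope can be compared side by side. In this sketch the intercept is the -2.007 value used in the code above, while the slope `b1 = 0.5` is a hypothetical value chosen only for illustration.

```r
b0 <- -2.007   # intercept on the logit scale (value used above)
b1 <- 0.5      # hypothetical slope, for illustration only

x <- 0:4
logit <- b0 + b1 * x
odds  <- exp(logit)
prob  <- plogis(logit)

# Each one-unit step adds b1 to the logit ...
diff(logit)

# ... and multiplies the odds by exp(b1)
odds[-1] / odds[-length(odds)]

# The change in probability is not constant across steps
diff(prob)
```

The logit differences and the odds multipliers are identical at every step, whereas the probability differences change with the starting value of `x`.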

Example: Fitting The Models

Model 0: The empty model for logistic regression with binary variable (applying for grad school) as the outcome

Model 1: The logistic regression model including centered GPA and two binary predictors (parent has a graduate degree, student attends a public university)

Model 0: The empty model

The statistical form of empty model:

\[ P(Y_p =1) = \frac{\exp(\beta_0)}{1+\exp(\beta_0)} \]

or

\[ \text{logit}(P(Y_p = 1)) = \beta_0 \]

Takehome Note

Many generalized linear models don’t list an error term in the statistical form. This is because the error has fixed mean and fixed variances.

For the logit link, \(e_p\) has a logistic distribution with a mean of zero and a variance of \(\pi^2/3 \approx 3.29\).
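The fixed variance of the logistic error can be checked by simulation. This is a rough sketch; the seed and the number of draws are arbitrary choices.

```r
set.seed(6)   # arbitrary seed

# Theoretical variance of the standard logistic distribution
pi^2 / 3

# Simulated standard logistic errors
e <- rlogis(1e5)
c(mean = mean(e), variance = var(e))
```

The simulated mean is close to 0 and the simulated variance is close to \(\pi^2/3\); neither depends on any model parameter.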

- Using the `ordinal` package and its `clm()` function, we can model categorical dependent variables

library(ordinal)

# (1) response variable must be a factor

dataLogit$LLAPPLY = factor(dataLogit$LLAPPLY, levels = 0:1)

# (2) Empty model: likely to apply

model0 = clm(LLAPPLY ~ 1, data = dataLogit, control = clm.control(trace = 1))

- (1) The dependent variable must be stored as a factor

- (2) The `formula` and `data` arguments are identical to `lm`; the `control =` argument is only used here to show the iteration history of the ML algorithm

iter: step factor: Value: max|grad|: Parameters:

0: 1.000000e+00: 277.259: 2.000e+01: 0

nll reduction: 2.00332e+00

1: 1.000000e+00: 275.256: 6.640e-02: 0.2

nll reduction: 2.22672e-05

2: 1.000000e+00: 275.256: 2.222e-06: 0.2007

nll reduction: 3.97904e-13

3: 1.000000e+00: 275.256: 2.307e-14: 0.2007

Optimizer converged! Absolute and relative convergence criteria were met

Model 0: Result

formula: LLAPPLY ~ 1

data: dataLogit

link threshold nobs logLik AIC niter max.grad cond.H

logit flexible 400 -275.26 552.51 3(0) 2.31e-14 1.0e+00

Threshold coefficients:

Estimate Std. Error z value

0|1 0.2007 0.1005 1.997

The `clm` function outputs a threshold parameter (labelled as \(\tau_0\)) rather than an intercept (\(\beta_0\)). The relationship between \(\beta_0\) and \(\tau_0\) is \(\beta_0 = - \tau_0\)

Thus, the estimated \(\beta_0\) is -0.2007 with SE = 0.1005 for model 0

The predicted logit is -0.2007; the predicted probability of Y = 1 is .45, matching the observed 45% of students with LLAPPLY = 1

The log-likelihood is -275.26; AIC is 552.51
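The empty-model estimates can be reproduced from the reported frequencies alone (220 zeros and 180 ones). This sketch swaps in base R's `glm()` for `clm()`, so the intercept it returns is \(\beta_0\) directly and the threshold is obtained as \(-\beta_0\); the object names are introduced only for this illustration.

```r
# Rebuild the binary outcome from the reported frequencies
y <- rep(c(0, 1), times = c(220, 180))

fit0 <- glm(y ~ 1, family = binomial(link = "logit"))

b0 <- unname(coef(fit0))   # intercept on the logit scale

c(beta0      = b0,
  tau0       = -b0,                         # threshold as parameterized by clm()
  logit_prop = qlogis(mean(y)),             # logit of the observed proportion of 1s
  p_hat      = plogis(b0),                  # predicted probability of Y = 1
  logLik     = as.numeric(logLik(fit0)),
  AIC        = AIC(fit0))
```

In an empty model the intercept is simply the logit of the sample proportion, which is why the predicted probability equals the observed share of 1s.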

Model 1: The conditional model

\[ P(Y_p = 1) = \frac{\exp(\beta_0 + \beta_1PARED_p + \beta_2 (GPA_p-3) +\beta_3 PUBLIC_p)}{1 + \exp(\beta_0 + \beta_1PARED_p + \beta_2 (GPA_p-3) +\beta_3 PUBLIC_p)} \]

or

\[ \text{logit}(P(Y_p =1)) = \beta_0 + \beta_1PARED_p + \beta_2 (GPA_p-3) +\beta_3 PUBLIC_p \]

dataLogit$GPA3 <- dataLogit$GPA - 3

model1 = clm(LLAPPLY ~ PARED + GPA3 + PUBLIC, data = dataLogit)

summary(model1)

formula: LLAPPLY ~ PARED + GPA3 + PUBLIC

data: dataLogit

link threshold nobs logLik AIC niter max.grad cond.H

logit flexible 400 -264.96 537.92 3(0) 3.71e-07 1.0e+01

Coefficients:

Estimate Std. Error z value Pr(>|z|)

PARED 1.0596 0.2974 3.563 0.000367 ***

GPA3 0.5482 0.2724 2.012 0.044178 *

PUBLIC -0.2006 0.3053 -0.657 0.511283

---

Signif. codes: 0 '***' 0.001 '**' 0.01 '*' 0.05 '.' 0.1 ' ' 1

Threshold coefficients:

Estimate Std. Error z value

0|1 0.3382 0.1187 2.849

Model 1: Results

LL(Model 1) = -264.96 (LL(Model 0) = -275.26)

\(\beta_0 (SE) = -\tau_0 = -0.3382 (0.1187)\)

\(\beta_1 (SE) = 1.0596 (0.2974)\) with \(p < .05\)

\(\beta_2 (SE) = 0.5482 (0.2724)\) with \(p < .05\)

\(\beta_3 (SE) = -0.2006 (0.3053)\) with \(p = .511\)

Understand the results

Question #1: does Model 1 fit better than the empty model (Model 0)?

This question is equivalent to testing the following hypothesis:

\[ H_0: \beta_1=\beta_2=\beta_3=0\\ H_1: \text{At least one not equal to 0} \]

We can use a Likelihood Ratio Test:

\[ -2\Delta LL = -2 \times (-275.26 - (-264.96)) = 20.60 \]

(20.586 when computed from the unrounded log-likelihoods)

DF = 4 (# of params of Model 1) - 1 (# of params of Model 0) = 3

p-value: p = .0001283

Likelihood ratio tests of cumulative link models:

formula: link: threshold:

model0 LLAPPLY ~ 1 logit flexible

model1 LLAPPLY ~ PARED + GPA3 + PUBLIC logit flexible

no.par AIC logLik LR.stat df Pr(>Chisq)

model0 1 552.51 -275.26

model1 4 537.92 -264.96 20.586 3 0.0001283 ***

---

Signif. codes: 0 '***' 0.001 '**' 0.01 '*' 0.05 '.' 0.1 ' ' 1

# Or we can use chi-square distribution to calculate p-value

as.numeric(pchisq(-2 * (logLik(model0)-logLik(model1)), 3, lower.tail = FALSE))

[1] 0.0001282981

Conclusion: reject \(H_0\); we prefer Model 1 to the empty model
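The likelihood ratio test can also be reproduced by hand from the printed values, without refitting the models. This sketch uses only the rounded log-likelihoods and the LR statistic reported in the output; the variable names are introduced here for illustration.

```r
ll0 <- -275.26   # log-likelihood of Model 0 as reported (rounded)
ll1 <- -264.96   # log-likelihood of Model 1 as reported (rounded)
k0  <- 1         # number of estimated parameters in Model 0
k1  <- 4         # number of estimated parameters in Model 1

lr_rounded <- -2 * (ll0 - ll1)   # statistic from the rounded log-likelihoods
df <- k1 - k0

lr_reported <- 20.586            # LR.stat as printed in the output
p_value <- pchisq(lr_reported, df = df, lower.tail = FALSE)

c(LR_rounded = lr_rounded, df = df, p_value = p_value)
```

The small gap between the hand-computed statistic and the printed one comes only from rounding the log-likelihoods to two decimals.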

- Question #2: are the effects of GPA, PARED, and PUBLIC significant?

Intercept \(\beta_0 = -0.3382 (0.1187)\):

Logit: the predicted logit of applying to grad school is -0.3382 for a person with a 3.0 GPA, parents without a graduate degree, and at a private university

Odds Ratio: the predicted odds (OR) of applying to grad school are \(0.7130\) for a person with a 3.0 GPA, parents without a graduate degree, and at a private university (OR < 1: the probability of applying is less than the probability of not applying)

Probability: the predicted probability of applying to grad school is 41.6% for a person with a 3.0 GPA, parents without a graduate degree, and at a private university

Slope of parents having a graduate degree: \(\beta_1 (SE) = 1.0596 (0.2974)\) with \(p < .05\)

Logit: the predicted logit of applying to grad school is 1.0596 higher for students whose parents have a graduate degree, controlling for the other predictors.

Odds Ratio: the predicted odds increase from 0.7130 to 2.06 for students whose parents have a graduate degree, controlling for the other predictors – students who have parents with a graduate degree have about 2.9 times (\(\exp(1.0596) = 2.885\)) the odds of rating the item “likely to apply”

Probability: Compared to those without a parental graduate degree, the predicted probability of “likely to apply” for students with a parental graduate degree increases from .416 to .673

\[ \frac{\exp(\beta_0+\beta_1)}{1+ \exp(\beta_0+\beta_1)}= .673 \]

\[ \frac{\exp(\beta_0)}{1+ \exp(\beta_0)}= .416 \]

Slope of students in public vs. private universities: \(\beta_3 (SE) = -0.2006 (0.3053)\) with \(p = .511\)

## Interpret output

OR_p <- function(logit_old, logit_new){

data.frame(

OR_old = exp(logit_old),

OR_new = exp(logit_new),

p_old = exp(logit_old) / (1 + exp(logit_old)),

p_new = exp(logit_new) / (1 + exp(logit_new))

)

}

beta_0 <- -0.3382

beta_3 <- -0.2006

Result <- OR_p(logit_old = beta_0, logit_new = (beta_0 + beta_3))

Result |> show_table()

| OR_old | OR_new | p_old | p_new |

|---|---|---|---|

| 0.7130527 | 0.583448 | 0.4162468 | 0.3684668 |

[1] 0.8182397

[1] -0.04778001

Interpretation

Students have 0.82 times the odds of applying to grad school when they are in public universities

The probability of applying to grad school decreases by 4.78 percentage points (from 41.6% to 36.8%).

This change in odds ratio and probability of applying for grad. school is not significant.

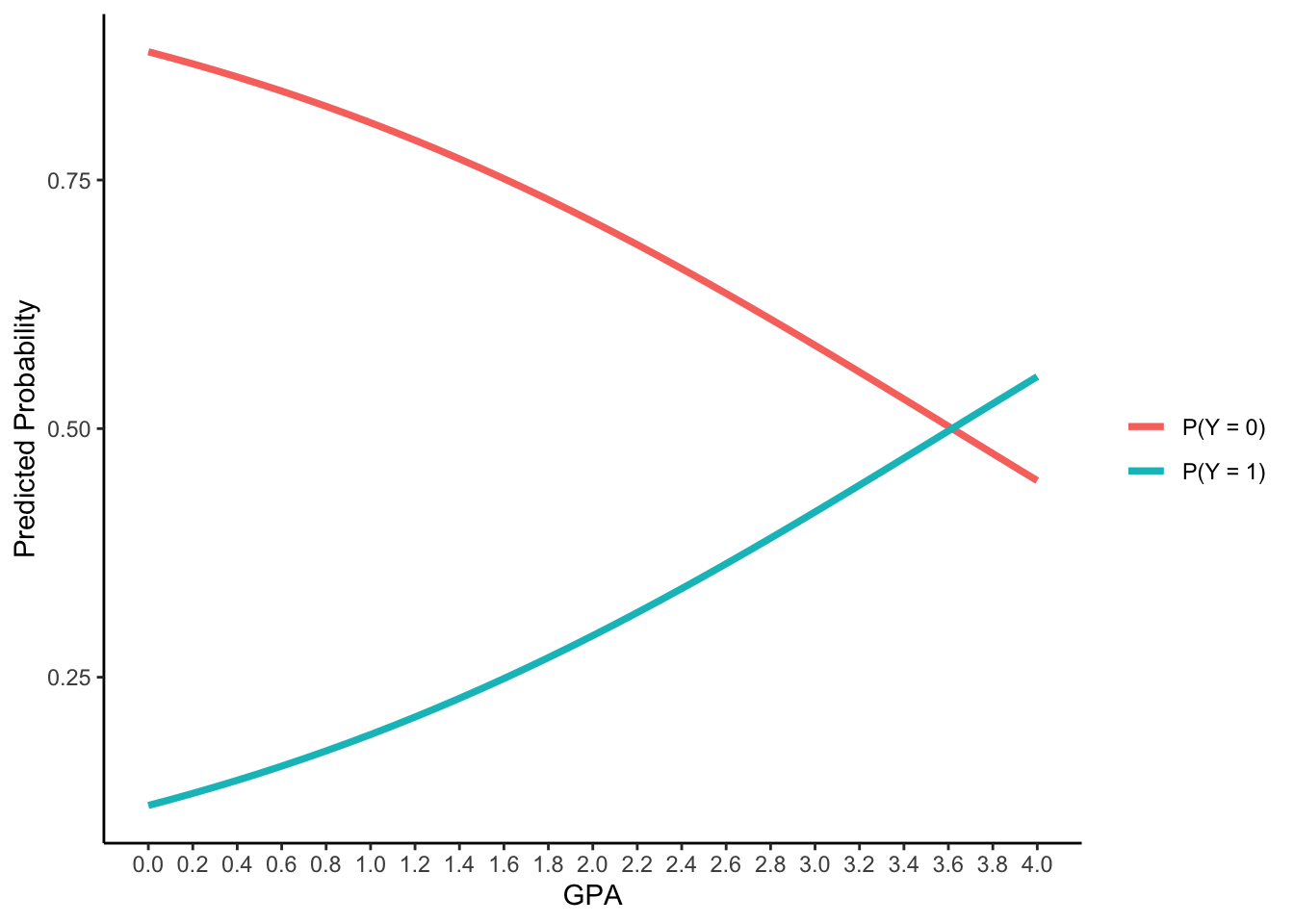

Slope of GPA3: \(\beta_2(SE) = 0.5482 (0.2724)\) with \(p < .05\)

Interpretation

- For every one-unit increase in GPA, the logit of applying to grad school increases by 0.548 and the odds are multiplied by 1.73; the predicted probabilities are 19.2%, 29.2%, 41.6%, and 55.2% for GPA = 1, 2, 3, and 4 (with PARED = 0 and PUBLIC = 0)
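The probabilities quoted for GPA = 1 to 4 follow directly from the Model 1 estimates. This sketch plugs the reported coefficients into the inverse logit with base R's `plogis()`, holding PARED and PUBLIC at 0; the variable names are chosen here for illustration.

```r
b0 <- -0.3382   # intercept: beta0 = -tau0 from the Model 1 output
b2 <-  0.5482   # slope for GPA3 (GPA centered at 3)

gpa   <- 1:4
logit <- b0 + b2 * (gpa - 3)   # PARED = 0 and PUBLIC = 0
prob  <- plogis(logit)

round(data.frame(GPA = gpa, logit = logit, odds = exp(logit), prob = prob), 3)

# Multiplicative change in the odds per one-point increase in GPA
exp(b2)
```

The logit rises by the same 0.548 at every step, but the probability gaps between adjacent GPA values are unequal.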

new_data <- data.frame(

GPA3 = seq(-3, 1, .1),

PARED = 0,

PUBLIC = 0

)

Pred_prob <- predict(model1, newdata=new_data)$fit

as.data.frame(cbind(GPA = new_data$GPA3+3, P_Y_0 = Pred_prob[,1], P_Y_1 = Pred_prob[,2])) |>

pivot_longer(starts_with("P_Y")) |>

ggplot() +

aes(x = GPA, y = value) +

geom_path(aes(group = name, color = name), linewidth = 1.3) +

labs(y = "Predicted Probability") +

scale_x_continuous(breaks = seq(0, 4, .2)) +

scale_color_discrete(labels = c("P(Y = 0)", "P(Y = 1)"), name = "") +

theme_classic()

Takehome Note

- For logistic models with two responses:

- Regression weights are now for LOGITS

- The direction of what is being modeled has to be understood (Y = 0 or = 1)

- The change in odds and probability is not linear per unit change in the IV, but instead is linear with respect to the logit

- Interactions will still modify the conditional main effects

- Simple main effects are effects when interacting variables = 0

Wrap up

Generalized linear models are models for outcomes with distributions that are not necessarily normal

The estimation process is largely the same: maximum likelihood is still the gold standard as it provides estimates with understandable properties

Learning about each type of distribution and link takes time:

- They all are unique and all have slightly different ways of mapping outcome data onto your model

Logistic regression is one of the more frequently used generalized models – binary outcomes are common

ESRM 64503 - Lecture 06: Matrix Algebra